The current state of generative media is characterized by a strange paradox: it has never been easier to produce an image, yet it has rarely been more difficult to produce a specific, publish-ready asset. For content teams, the “magic” of a one-click generation wears off the moment a hand has six fingers or a product color is slightly off-brand. This is the last-mile problem of AI content production. Most tools are built for the initial spark—the generation—but they fail during the refinement stage where professional work actually happens.

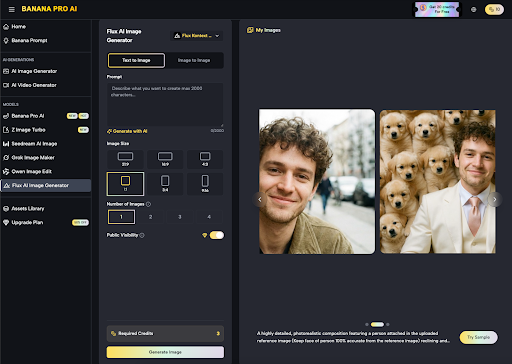

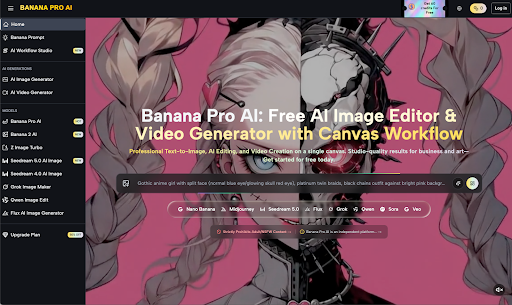

Moving between a dedicated generator, a separate upscaler, and a legacy photo editor creates a fragmented workflow that eats into the time savings AI was supposed to provide. To solve this, teams are shifting toward platforms that treat the creative process as a continuous pipeline rather than a series of isolated events. This shift is evident in the way operators use integrated environments like Banana Pro to bridge the gap between a raw prompt and a final, usable file.

The Friction of Fragmented Tooling

In a standard marketing workflow, generating the image is usually only about 20% of the total effort. The remaining 80% goes to adjusting composition, correcting artifacts, and making sure the asset meets the technical requirements of the destination platform. A team that relies on a standalone model often gets stuck in a loop of “prompt engineering” to fix minor details that could be solved in seconds with a brush or a localized edit.

This is where the AI Image Editor becomes a critical part of the stack. Instead of asking the model to regenerate the entire image to change a background or fix a lighting issue, editors allow for granular control. This “canvas-first” mentality acknowledges that AI is a collaborator, not a replacement for the director’s eye. It also highlights a current limitation in the industry: while generative models are becoming more powerful, their internal logic remains a black box. We cannot yet precisely communicate complex spatial relationships through text alone, which makes manual intervention via an editor an absolute necessity for professional output.

Operationalizing Nano Banana Pro

For creators managing high-volume output, the focus has shifted from “which model is the best” to “which workflow is the fastest.” The Nano Banana Pro framework is designed around this realization. It isn’t just about accessing a specific model like Seedream 5.0 or Z Image Turbo; it’s about how these models interact within a single interface.

When a team is tasked with creating a series of social media assets, they might start with a base generation in Nano Banana to establish the aesthetic. From there, the asset moves into a transformation phase. This is where the operator might apply an image-to-image process to maintain character consistency across multiple frames. Without this integrated flow, the risk of “style drift” becomes a major bottleneck, forcing teams to discard half of their generations.

The Transition from Static to Motion

The leap from static imagery to video is perhaps the most difficult hurdle in the current creative landscape. Video generation tools like Seedance 2.0 have significantly lowered the barrier to entry, but they introduce a new set of uncertainties. Temporal consistency, meaning that an object looks the same in the fifth second of a clip as it did in the first, is still a work in progress across the entire AI sector.

A content team using Banana AI often has to adopt a “generate and prune” strategy: generate several iterations of a clip, pick the one with the least morphing, and then use traditional editing techniques to mask the remaining imperfections. It is important to reset expectations here: we are not yet at a point where an AI can generate a perfect 30-second commercial in one go. The most effective use cases right now involve short, 2-to-4-second “hero” clips that are stitched together with high-quality static assets.
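The “generate and prune” step can be partly automated as a first-pass filter. The sketch below is illustrative only and is not part of any platform's API. It assumes each candidate clip has already been decoded into frames of flattened grayscale intensities, and it uses mean frame-to-frame pixel change as a crude stand-in for morphing. The names `instability` and `prune` are hypothetical. The metric also penalizes legitimate motion, so it is best used to shortlist clips for a human reviewer rather than to make the final call.

```python
from statistics import mean

# Assumption: a frame is a flat list of grayscale intensities in [0.0, 1.0],
# and every frame in a clip has the same, non-zero length.
Frame = list[float]


def instability(frames: list[Frame]) -> float:
    """Mean absolute pixel change between consecutive frames (lower = steadier)."""
    if len(frames) < 2:
        return 0.0
    per_step = [
        mean(abs(a - b) for a, b in zip(prev, curr))
        for prev, curr in zip(frames, frames[1:])
    ]
    return mean(per_step)


def prune(clips: dict[str, list[Frame]], keep: int = 1) -> list[str]:
    """Return the names of the `keep` steadiest clips, steadiest first."""
    ranked = sorted(clips, key=lambda name: instability(clips[name]))
    return ranked[:keep]


if __name__ == "__main__":
    # Tiny hand-made clips: "take_a" flickers heavily, "take_b" barely changes.
    candidates = {
        "take_a": [[0.0, 0.0], [1.0, 1.0]],
        "take_b": [[0.5, 0.5], [0.5, 0.6]],
    }
    print(prune(candidates))
```

In practice a team would likely swap the pixel-difference score for something more perceptual, but the shape of the loop stays the same: score every take, keep the top few, and hand those to an editor.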

Practical Use Cases: Where the Value Is Realized

To understand where these tools fit, we have to look at the specific production tiers common in modern marketing:

High-Frequency Social Assets

In this scenario, speed is the primary metric. Using Nano Banana for rapid prototyping allows a creator to test ten different visual concepts in the time it used to take to find one stock photo. Because the quality of modern models like Banana Pro is high enough for mobile screens, the “good enough” threshold is reached almost instantly. The value lies in the ability to iterate based on performance data rather than creative intuition alone.

Localized Campaign Creative

Global teams often face the challenge of localizing visual content for different regions. An integrated workflow allows an editor to take a master shot and use in-painting or style transfer to adjust the background elements, clothing, or color palettes to better suit a specific market. This prevents the need for multiple expensive photoshoots and ensures a baseline of brand consistency that is difficult to maintain with disconnected tools.

Rapid Prototyping for Video

Before committing to a full production budget, creative leads are using video generators to create “AI-mations” or moving storyboards. This provides a much clearer sense of timing and mood than a static deck. While the final output might still be filmed traditionally, the use of a workflow studio to visualize the concept saves days of pre-production meetings.

The Reality of Model Selection

One of the mistakes many content teams make is over-relying on a single model for every task. In practice, different engines have different “personalities.” A model optimized for speed, like Z Image Turbo, is excellent for brainstorming and internal mockups where latency is the enemy. However, when it comes to a high-fidelity print ad or a hero image for a landing page, a more computationally heavy model like Seedream 5.0 is usually preferred for its better handling of fine textures and complex lighting.
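One way to make this choice routine is to encode it as a small routing rule, so nobody picks a model by habit. The following is a minimal sketch under stated assumptions. The model names come from the paragraph above, but the purpose labels (`"print_ad"`, `"hero_image"`, and so on) and the `pick_model` function are hypothetical and do not correspond to any real platform setting.

```python
# Model names as mentioned in the article; everything else here is illustrative.
FAST_MODEL = "Z Image Turbo"      # speed-optimized: brainstorming, internal mockups
FIDELITY_MODEL = "Seedream 5.0"   # heavier: fine textures, complex lighting

# Assumption: a team tags each job with a purpose label of its own choosing.
HIGH_FIDELITY_PURPOSES = {"print_ad", "hero_image"}


def pick_model(purpose: str) -> str:
    """Route high-fidelity deliverables to the heavier model, everything else to the fast one."""
    if purpose in HIGH_FIDELITY_PURPOSES:
        return FIDELITY_MODEL
    return FAST_MODEL


if __name__ == "__main__":
    for purpose in ("brainstorm", "mockup", "print_ad", "hero_image"):
        print(f"{purpose}: {pick_model(purpose)}")
```

Defaulting to the fast model keeps exploratory work cheap, and the heavier model is used only when a deliverable is explicitly tagged as high fidelity.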

The Nano Banana Pro ecosystem works because it doesn’t force a choice between these extremes. It provides the canvas where these different models can be deployed as needed. However, an honest assessment reveals a lingering frustration for many users: the learning curve. Even with a simplified UI, understanding the nuances of how an image-to-image strength setting affects the final output requires significant trial and error. There is a “feel” to these tools that only comes with hours of operation, making the human element—the operator—the most important part of the equation.

Designing the Future Workflow

The goal for any content team should be to build a “repeatable asset pipeline.” This means moving away from the novelty of AI and toward a systematic approach to creation. If you are using an editor, you should be using it to solve specific problems: removing a watermark, expanding a frame (out-painting), or swapping a product.

We must also be cautious about the hype surrounding “fully autonomous” creative. Content that is generated without a human-in-the-loop often feels hollow or, worse, uncanny. The most successful teams treat AI as a high-powered production assistant. They use the platform to handle the repetitive, labor-intensive tasks—like rotoscoping a video or generating 50 variations of a background—while the creative lead focuses on the narrative and the emotional resonance of the piece.

The Skill Shift for Modern Creators

As these tools become more integrated, the necessary skill set for creators is shifting. We are moving away from a world where technical proficiency in complex software was the primary gatekeeper to creative output. Instead, the skills that now set creators apart are visual composition, art direction, and the ability to “curate” at scale.

Knowing how to use an image-to-image workflow to maintain a brand’s color story across a hundred generated assets is more valuable than being able to draw those assets from scratch. This isn’t a devaluation of traditional skills, but a pivot in how those skills are applied. The artist is now the director of an infinitely fast production house.

Final Considerations for Content Teams

When evaluating a platform, teams should look past the gallery of “perfect” images on the landing page. Instead, they should test how the tool handles the messy reality of editing. How easy is it to undo a specific change? Can you move an asset from the image generator to the video generator without losing the metadata?

The industry is still in a phase of rapid evolution, and what works today might be superseded by a new architecture in six months. This uncertainty is a feature of the landscape, not a bug. The teams that thrive will be those that don’t marry themselves to a single model but instead master a flexible workflow that can adapt as the underlying technology improves.

In the end, the success of AI in a professional environment isn’t measured by the “wow” factor of a single prompt. It is measured by the reduction in friction between a concept and a finished file. By focusing on the last-mile problems—editing, consistency, and integration—content teams can finally turn the promise of generative AI into a sustainable production reality. Whether it is through the rapid iterations possible in Nano Banana or the deep refinement allowed by a specialized editor, the goal remains the same: getting the work out the door with as little wasted motion as possible.